Research topics

Overview

The rate of solid-state syntheses is essentially governed by the diffusion rate of the reactants through the different phases. High temperatures and high pressures favour high reaction rates. Furthermore, working at high temperatures with easily decomposing metal oxides such as silver and gold oxides requires an oxygen partial pressure higher than the decomposition pressure of the compounds involved. The unique structural features of silver-rich silver(I) oxides were the earliest experimental evidence suggesting attractive d¹⁰-d¹⁰ interactions between monovalent silver atoms. In spite of bearing equal positive charges, the silver atoms in these compounds associate to form cluster-like ensembles that correspond to sections of the structure of elemental silver.

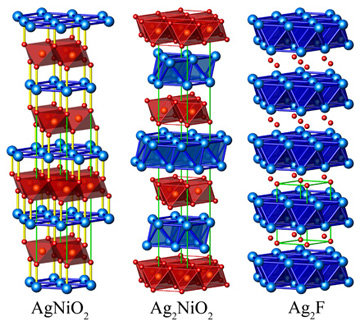

Ag2NiO2

Ag2NiO2 has been prepared by the solid-state reaction of Ag2O and NiO under high oxygen pressure. It is a lustrous black solid that is insensitive to air and water. Conventionally, the oxidation states +1 and +2 would be assigned to silver and nickel, respectively, corresponding to the valence distribution in the homologous compound Ag2PdO2. These assignments, however, severely conflict with the structural features, magnetic moment, and spectroscopic properties found for Ag2NiO2. Instead, all the experimental findings are consistent with a charge distribution of [Ag2]⁺[NiO2]⁻. Surprisingly, the new silver oxonickelate Ag2NiO2 thus contains subvalent silver. Only a few examples of subvalent silver compounds, such as Ag2F, Ag3O, Ag5SiO4, Ag5GeO4, and Ag5Pb2O6, have been prepared to date. Their properties range from metallic behavior in Ag2F, Ag3O, and Ag5Pb2O6 to semiconducting behavior in Ag5SiO4 and Ag5GeO4.

The structure shows striking similarities with the delafossite structure on the one hand and with the silver subfluoride Ag2F on the other. In delafossites of the composition AgMO2 (M = trivalent transition metal ion), MO6 octahedra are linked by their edges to form layers analogous to those in cadmium chloride. In Ag2NiO2, the NiO2 slabs are separated by two staggered hexagonal silver layers. This feature corresponds to the silver substructure of Ag2F, where the silver atoms also form layers of edge-sharing octahedra. The in-plane silver-silver distances are 2.926 Å and thus similar to those in elemental silver (2.889 Å). The smallest distances between adjacent silver atoms of subsequent layers are even shorter, at 2.836 Å. Since this short distance is not enforced by structural constraints, it must be attributed directly to chemical bonds between the silver atoms.

As there is only one crystallographic silver position and silver has an oxidation state of +1/2, at least partial metallic character can be attributed to this bond. Indeed, Ag2NiO2 is a good metallic conductor with a specific resistance of 2.18×10⁻⁴ Ω cm at 298 K. This is comparable to the electric transport properties of Ag2F and Ag3O.
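The two charge assignments discussed for Ag2NiO2 can be written out as a simple charge balance. The following is only a bookkeeping sketch that assumes oxygen is counted as formal O²⁻; the symbols x_Ag and x_Ni are introduced here for illustration and do not appear in the original text.

```latex
\begin{align*}
\text{conventional:}\quad
  & 2\,(+1)_{\mathrm{Ag}} + (+2)_{\mathrm{Ni}} + 2\,(-2)_{\mathrm{O}} = 0 \\[4pt]
[\mathrm{Ag_2}]^{+}[\mathrm{NiO_2}]^{-}:\quad
  & 2\,x_{\mathrm{Ag}} = +1 \;\Rightarrow\; x_{\mathrm{Ag}} = +\tfrac{1}{2} \\
  & x_{\mathrm{Ni}} + 2\,(-2) = -1 \;\Rightarrow\; x_{\mathrm{Ni}} = +3
\end{align*}
```

Both assignments are charge-neutral overall, so the formula alone cannot distinguish them; the preference for [Ag2]⁺[NiO2]⁻ rests on the structural, magnetic, and spectroscopic evidence cited above.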


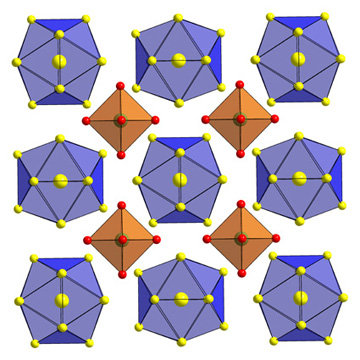

Ag13OsO6

Ag13OsO6 is a new subvalent silver compound containing silver atoms bonded exclusively to silver, in the form of interconnected icosahedral Ag13⁴⁺ clusters, together with dispersed [OsO6]⁴⁻ octahedra. It is best obtained by one of two synthetic routes: the reaction of the elements in gold ampoules in the presence of water as a mineraliser, or the solid-state reaction of silver(I) oxide with elemental osmium. Both reactions have to be run at elevated oxygen pressure.

The crystal structure consists of [OsO6]⁴⁻ octahedra and icosahedral Ag13⁴⁺ clusters arranged as in the CsCl structure type. Although the [OsO6]⁴⁻ octahedra are perfect, the site symmetry of the single Os cation is not Oh but only O. This is mostly due to a rotation of the squares around the cubic axes, which leads to a loss of the mirror planes. The Ag13⁴⁺ clusters are built up of silver-centred silver icosahedra with internuclear distances of 279 pm, which are shorter than those in elemental face-centred cubic (fcc) silver.

Ag13OsO6 is a metallic conductor with a specific resistance of ρ = 2.23×10⁻⁴ Ω cm at 298 K and a positive temperature coefficient of 0.77 μΩ cm K⁻¹, and it is diamagnetic. Neither the structural data nor the physical properties allow for an unambiguous determination of the oxidation state of Os. LMTO calculations show that the charge state of the octahedron may be considered as [OsO6]⁴⁻, and that of the icosahedron as Ag13⁴⁺. Since the occupied bands have stronger Os character, the notation Os⁸⁺(O²⁻)6 should be avoided.
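The numbers quoted for Ag13OsO6 can be put into a short Python sketch. It computes the mean formal charge per silver atom in the Ag13⁴⁺ cluster and gives a first-order estimate of the resistivity near room temperature. The function names are hypothetical, and the linear temperature dependence is an assumption made here for illustration: the text gives only the value at 298 K and the temperature coefficient, so the estimate should not be trusted far from 298 K.

```python
from fractions import Fraction

# Values quoted in the text for Ag13OsO6 (converted to micro-ohm cm).
RHO_298_MICRO_OHM_CM = 223.0   # 2.23e-4 ohm cm at 298 K
DRHO_DT_MICRO_OHM_CM_PER_K = 0.77  # positive temperature coefficient


def mean_formal_charge(total_charge: int, n_atoms: int) -> Fraction:
    """Mean formal charge per atom of a cluster with the given total charge."""
    return Fraction(total_charge, n_atoms)


def resistivity_linear(temperature_k: float) -> float:
    """First-order (linear) resistivity estimate in micro-ohm cm.

    ASSUMPTION: rho(T) is treated as linear around 298 K; this is an
    illustrative extrapolation, not a result stated in the source.
    """
    return RHO_298_MICRO_OHM_CM + DRHO_DT_MICRO_OHM_CM_PER_K * (temperature_k - 298.0)


if __name__ == "__main__":
    # Ag13 cluster carrying a 4+ charge: 4/13 per silver atom.
    print(mean_formal_charge(4, 13))
    # For comparison, the [Ag2]+ unit in Ag2NiO2: 1/2 per silver atom.
    print(mean_formal_charge(1, 2))
    # Linear estimate ten kelvin above the reference temperature.
    print(resistivity_linear(308.0))
```

The mean charge of 4/13 per silver atom, below the +1/2 found in Ag2NiO2, is one way to see why both compounds are classed as subvalent.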


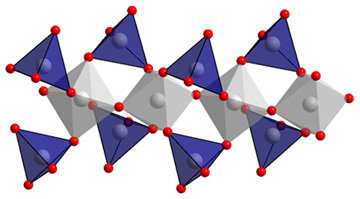

AgAsO3

The silver meta-arsenate(V) AgAsO3 contains a new complex polyanion described by [(AsO6/2)(AsO2/1O2/2)2]. As the Niggli formula shows, both four- and sixfold coordinated arsenic(V) are present. The AsO6 octahedra share two trans vertices to form chains. The remaining oxygen atoms of the octahedra, which are terminal with respect to the chain, are shared with the tetrahedra in such a way that each tetrahedron links two octahedra. These one-dimensional anions run along [010] and are packed hexagonally, with the silver cations in between.

AgAsO3 can be obtained from As2O3 and Ag2O that is either freshly precipitated or has been stored under an inert atmosphere, in gold or corundum crucibles under high oxygen pressure. To avoid the formation of Ag3AsO4, no mineraliser such as H2O may be added. Thermal investigations showed no changes up to 650 °C.


- U. Wedig, P. Adler, J. Nuss, H. Modrow, M. Jansen: Studies on the electronic structure of Ag2NiO2, an intercalated delafossite containing subvalent silver; Solid State Sciences 8 (2006) 753-63

- S. Ahlert, R. E. Dinnebier, M. Jansen: The Crystal Structures of the High-temperature Phases of Ag4Mn3O8; Z. anorg. allg. Chem. 631 (2005) 90-8

- K. M. Mogare, W. Klein, M. Jansen: Synthesis and Crystal Structure of Potassium Osmate(VIII), K2OsO5; Z. anorg. allg. Chem. 631 (2005) 468-71

- S. Ahlert, L. Diekhöner, R. Sordan, K. Kern, M. Jansen: Surface step structure of Ag13OsO6, experimental evidence for Ag13 cluster building blocks; J. Chem. Soc. Chem. Commun. 1 (2004) 462-3

- J. Curda, E.-M. Peters, M. Jansen: Ein Metaarsenat(V) Anion neuartiger Konstitution in AgAsO3; Z. anorg. allg. Chem. 630 (2004) 491-94

- S. Ahlert, W. Klein, O. Jepsen, O. Gunnarsson, O. K. Andersen, M. Jansen: Ag13OsO6: A Silver Oxide with Interconnected Icosahedral Ag134+ Clusters and Dispersed [OsO6]4- Octahedra; Angew. Chem. 115 (2003) 4458-61; Angew. Chem. Int. Ed. Engl. 42 (2003) 4322-25

- M. Schreyer, M. Jansen: Synthesis and Characterization of Ag2NiO2 Showing an Uncommon Charge Distribution; Angew. Chem. 114 (2002) 665-8; Angew. Chem. Int. Ed. Engl. 41 (2002) 643-6

- W. Klein, J. Curda, K. Friese, M. Jansen: Dilead mercury chromate(VI), Pb2HgCrO6; Acta Crystallogr. C 58 (2002) 23-4

- S. Ahlert, K. Friese, M. Jansen: The Structure of Twinned Ag4Mn3O8, a Novel Octahedral Framework with a Topology Related to the Archetype cubic {10,3} Net; Z. anorg. allg. Chem. 628 (2002) 1525-31

- S. Deibele, J. Curda, E.-M. Peters, M. Jansen: Ag2HgO2: The First Silver Mercurate; Chem. Commun. 2000, 679-680

- S. Deibele, M. Jansen: Ag2BiO3: Tetravalent or Internally Disproportionated?; J. Solid State Chem. 147 (1999), 117-121

- C. Linke, M. Jansen: Subvalent Ternary Silver Oxides: Synthesis, Structural Characterization, and Physical Properties of Pentasilver Orthosilicate, Ag5SiO4; Inorg. Chem. 33 (1994) 2614-2616

- M. Jansen: Homoatomare d10-d10-Wechselwirkungen - Auswirkungen auf Struktur- und Stoffeigenschaften; Angew. Chem. 99 (1987) 1136-49; Angew. Chem. Int. Ed. Engl. 26 (1987) 1098-1110